DCitizens

We investigate how technology can empower citizens and non-state actors to take an active role in shaping agendas.

Games for Inclusion

We explore how to create inclusive environments and behaviours with and through games.

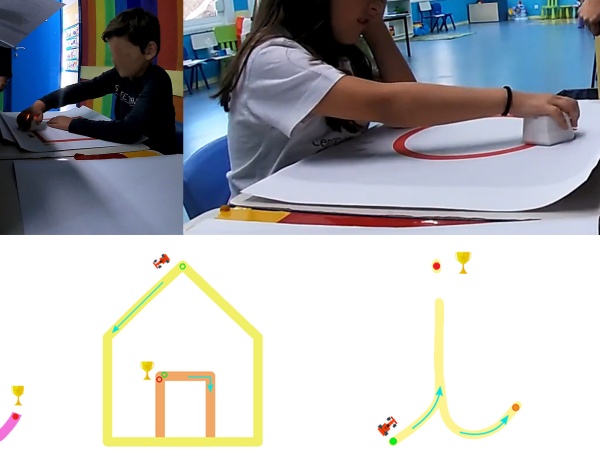

Inclusive Computational Thinking

We investigate the use of tangible systems to promote computational thinking skills in mixed-ability children.

Social Robots in Inclusive Classrooms

We investigate the use of social robots to create inclusive classrooms for children with mixed visual abilities.

AVATAR

AVATAR proposes creating a signing 3D avatar able to synthesize Portuguese Sign Language.

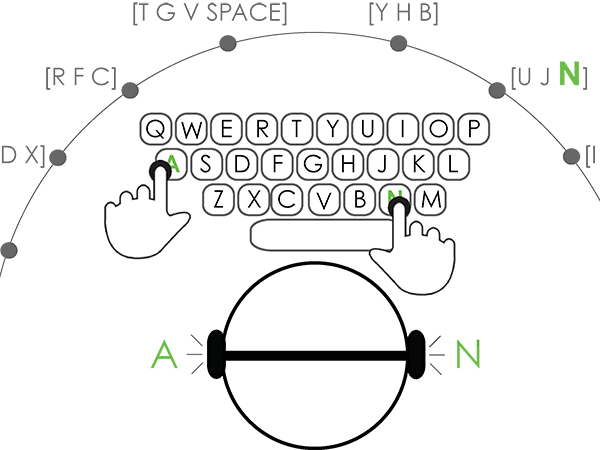

Non-visual Mobile Text-Entry

We are creating novel non-visual input methods for multiple form factors, from tablets to smartwatches.

ARCADE

ARCADE proposes leveraging interactive and digital technologies to create context-aware workspaces to improve physical rehabilitation practices.

Accessibility in the Wild

We are creating tools to characterize user performance in the wild and to improve current everyday devices and interfaces.

Nonvisual Word Completion

We investigate novel interfaces and interaction techniques for nonvisual word completion. We are particularly interested in quantifying the benefits and costs of such new solutions.

Multi-point Vibrotactile Feedback

As touchscreens have evolved to provide multitouch capabilities, we are exploring new multi-point feedback solutions.

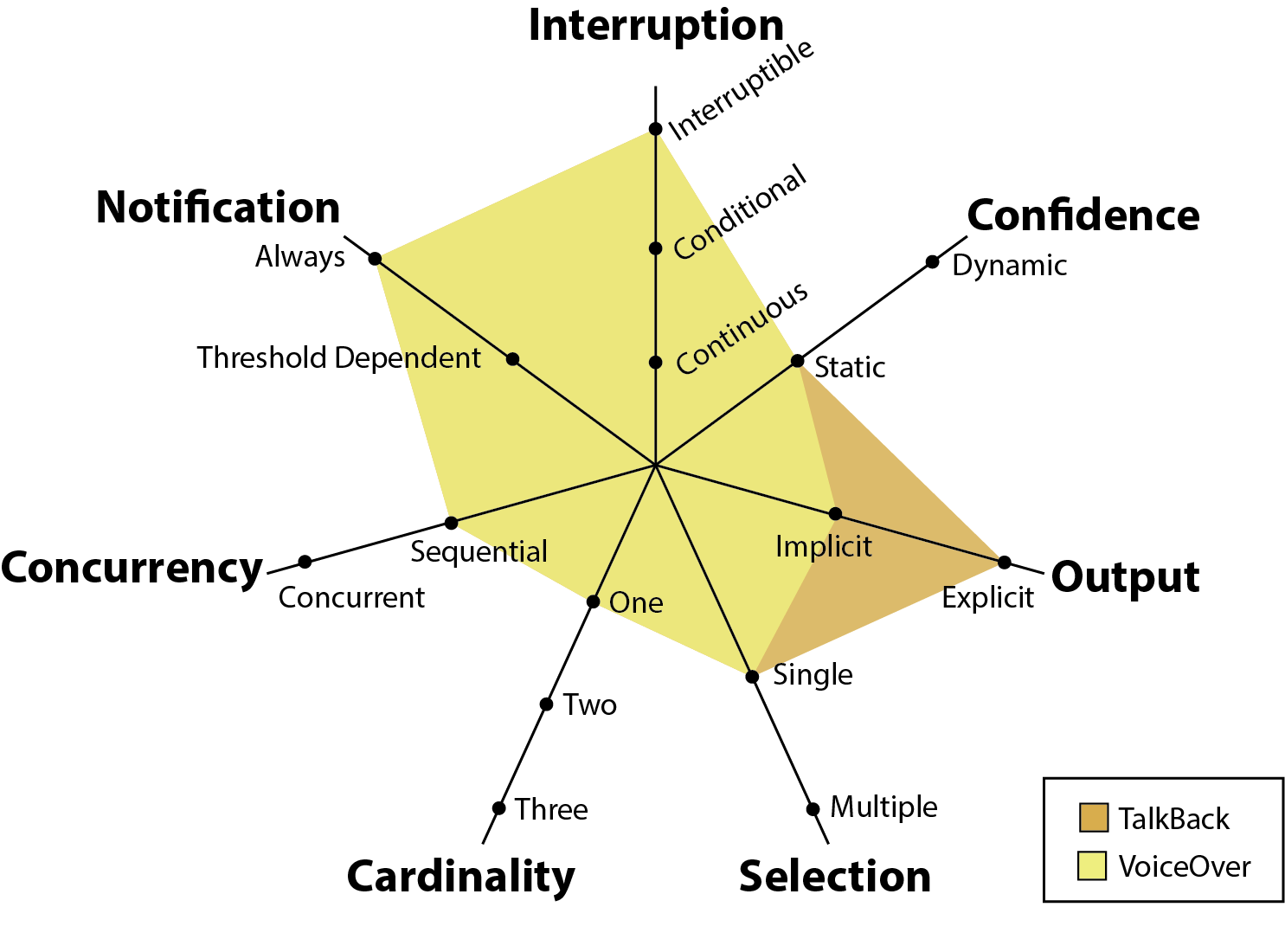

Applications for Concurrent Speech

We are investigating novel interactive applications that use concurrent speech to improve users' experiences.

Accessible Classrooms

This research leverages mobile and wearable technologies to improve classroom accessibility for Deaf and Hard of Hearing college students.

Motor-Impaired and Touchscreens

Our goal is to thoroughly study mobile touchscreen interfaces, their characteristics and parameterizations, thus providing the tools for informed interface design.

Braille 21

Braille 21 is an umbrella term for a series of research projects that aim to bring Braille to the 21st century. Our goal is to facilitate access to Braille in the new digital era.

Disabled 'R' All

We aim to understand the overlap between the problems faced by health-impaired and situationally impaired users when using their mobile devices, and to design solutions for both user groups.